Nvidia has open-sourced the KAI Scheduler under the Apache 2.0 license, a notable step for AI infrastructure. The release signals Nvidia’s commitment to enterprise AI while inviting collaboration from the wider tech community. It also brings together two things that are often kept apart: the rigorous standards of enterprise AI and the freedom and inclusivity of open source. Through this initiative, Nvidia aims to cultivate an ecosystem where developers and researchers contribute, exchange ideas, and push the boundaries of AI technology.

Addressing the Shortcomings of Traditional Resource Schedulers

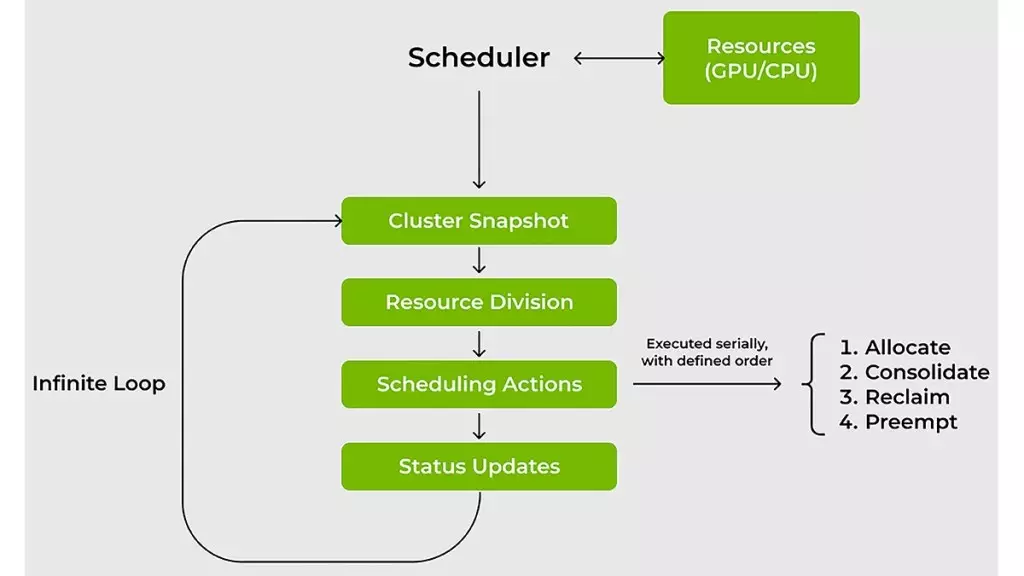

One of the biggest hurdles in managing AI workloads is traditional resource scheduling. Conventional schedulers often struggle with the dynamic and unpredictable nature of AI workloads, which leads to inefficiency and wasted compute. The KAI Scheduler was developed specifically to address these shortcomings: it recalculates resource allocations in real time as demand changes. This flexibility helps keep GPU utilization high and reflects Nvidia’s understanding of how machine learning needs are evolving.

This adaptive approach matters most to IT and ML teams whose demand fluctuates. A research task may need very little compute at first, while a large training run can suddenly require a whole cluster of GPUs. The KAI Scheduler handles these swings by adjusting quotas and limits dynamically, so administrators spend less time on manual resource management and more on the work itself.

Time is of the Essence: Speeds That Matter

In machine learning, every minute counts, and scheduling delays frustrate engineers who want to move their projects forward. The KAI Scheduler addresses this with techniques such as gang scheduling and hierarchical queuing. Gang scheduling starts all the pods of a job together or not at all, so a distributed job never sits half-launched while holding GPUs. Hierarchical queues let teams submit batches of jobs at once while priorities and fairness are still respected. Instead of micromanaging resource access, developers can submit work and rely on the scheduler to launch it when resources become available.
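The all-or-nothing idea behind gang scheduling can be sketched in a few lines of Python. This is an illustrative toy, not KAI Scheduler code: the function `gang_schedule`, the node names, and the first-fit placement rule are all assumptions made for the example.

```python
from typing import Optional


def gang_schedule(free_gpus: dict[str, int],
                  pod_requests: list[int]) -> Optional[dict[int, str]]:
    """Place every pod of a job, or none of them (hypothetical helper).

    free_gpus maps node name -> free GPU count.
    pod_requests lists the GPUs each pod of the job needs.
    Returns {pod index: node name}, or None if the whole gang does not fit.
    """
    trial = dict(free_gpus)  # work on a copy so a failed attempt changes nothing
    placement: dict[int, str] = {}
    # Place the largest pods first; they are the hardest to fit.
    for pod in sorted(range(len(pod_requests)), key=lambda i: -pod_requests[i]):
        # First fit: the first node (by name) with enough free GPUs.
        node = next((n for n in sorted(trial) if trial[n] >= pod_requests[pod]), None)
        if node is None:
            return None  # one pod cannot start, so the whole job waits
        trial[node] -= pod_requests[pod]
        placement[pod] = node
    free_gpus.update(trial)  # commit only after every pod has a node
    return placement


cluster = {"node-a": 4, "node-b": 2}
print(gang_schedule(cluster, [2, 2, 2]))  # all three pods fit and are placed
print(gang_schedule(cluster, [1, 1]))     # cluster is now full: None, nothing placed
```

The key design point is the copy-then-commit step: a job that cannot be placed in full leaves the cluster state untouched, so it never holds GPUs while waiting for the rest of its pods.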

The scheduler is also built to avoid underutilization, using two placement strategies: bin-packing and workload spreading. Bin-packing fills partially used nodes first, which reduces resource fragmentation and keeps whole nodes free for large jobs. Spreading distributes workloads evenly across nodes, which keeps as much capacity as possible available on each one. The KAI Scheduler also tackles the resource hoarding that often plagues shared clusters, where a few users hold on to GPUs they are not actively using and leave others waiting.
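The difference between the two placement strategies is easiest to see side by side. The sketch below is a simplified illustration, not the scheduler's actual scoring logic: `pick_node`, the strategy names, and the node names are hypothetical.

```python
from typing import Optional


def pick_node(free_gpus: dict[str, int], request: int,
              strategy: str = "binpack") -> Optional[str]:
    """Choose a node for a pod that needs `request` GPUs (hypothetical helper)."""
    candidates = [n for n, free in free_gpus.items() if free >= request]
    if not candidates:
        return None  # no single node can host the pod
    if strategy == "binpack":
        # Tightest fit: the node with the fewest free GPUs that still fits,
        # which leaves emptier nodes intact for larger jobs.
        return min(candidates, key=lambda n: (free_gpus[n], n))
    if strategy == "spread":
        # Loosest fit: the node with the most free GPUs,
        # which evens out load across the cluster.
        return min(candidates, key=lambda n: (-free_gpus[n], n))
    raise ValueError(f"unknown strategy: {strategy}")


free = {"node-a": 8, "node-b": 3, "node-c": 5}
print(pick_node(free, 2, "binpack"))  # node-b
print(pick_node(free, 2, "spread"))   # node-a
```

With bin-packing, the fully free `node-a` stays available for an 8-GPU job; with spreading, the same pod lands on `node-a` and the load is balanced instead.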

Bridging the Gap Between AI Frameworks and Workloads

As the AI community's toolkit grows, integrating the various AI frameworks becomes essential. Wiring workloads up to tools such as Kubeflow, Ray, and Argo can be daunting, and the manual configuration involved slows development and stretches project timelines. The KAI Scheduler reduces this complexity with its integrated podgrouper, which automatically connects workloads with the frameworks they require. This cuts manual configuration time and lets teams prototype faster. By addressing these integration challenges, Nvidia is not just providing a scheduler; it is fostering an environment conducive to experimentation.

Empowering AI Practitioners with Resource Guarantees

A distinctive feature of the KAI Scheduler is its enforcement of resource guarantees. This is vital in a shared computing environment, where competition for limited resources can quickly escalate. The scheduler ensures that the GPUs allocated to a team are available when that team needs them, and it reallocates idle resources to other workloads so they do not go to waste. The result is both fairer and more efficient: individual teams get what they were promised, and overall cluster utilization improves.
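One way to picture guarantees combined with reallocation of idle GPUs is a two-step allocation: first honor each queue's guaranteed quota, then lend whatever is left to queues that want more. The sketch below is an assumption-laden toy, not the scheduler's algorithm: `allocate`, the queue names, and the round-robin lending rule are invented for illustration.

```python
def allocate(total_gpus: int, queues: dict[str, dict[str, int]]) -> dict[str, int]:
    """Split GPUs across queues (hypothetical helper).

    queues maps name -> {"quota": guaranteed GPUs, "demand": GPUs requested}.
    Assumes the quotas together do not exceed total_gpus.
    """
    if sum(q["quota"] for q in queues.values()) > total_gpus:
        raise ValueError("quotas exceed cluster capacity")
    # Step 1: every queue receives its demand, up to its guaranteed quota.
    grant = {name: min(q["quota"], q["demand"]) for name, q in queues.items()}
    spare = total_gpus - sum(grant.values())
    # Step 2: lend spare GPUs, one at a time, to queues that still want more.
    while spare > 0:
        hungry = [n for n in sorted(queues) if grant[n] < queues[n]["demand"]]
        if not hungry:
            break  # all demand is met; remaining GPUs stay idle
        for name in hungry:
            if spare == 0:
                break
            grant[name] += 1
            spare -= 1
    return grant


# Research is mostly idle, so training borrows its unused share.
print(allocate(8, {"research": {"quota": 4, "demand": 1},
                   "training": {"quota": 4, "demand": 10}}))
# {'research': 1, 'training': 7}

# Research needs its full quota again, so the split returns to the guarantee.
print(allocate(8, {"research": {"quota": 4, "demand": 4},
                   "training": {"quota": 4, "demand": 10}}))
# {'research': 4, 'training': 4}
```

In a real cluster, returning borrowed GPUs to their owner means reclaiming them from running workloads, which this sketch does not model; it only shows how the target split changes as demand shifts.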

Nvidia’s KAI Scheduler is a meaningful advance in AI infrastructure. By opening the project to the open-source community while addressing the pain points ML engineers face most often, it points toward a more collaborative and efficient future for AI development. As the line between enterprise solutions and open-source creativity continues to blur, Nvidia is positioning itself at the front of that shift.