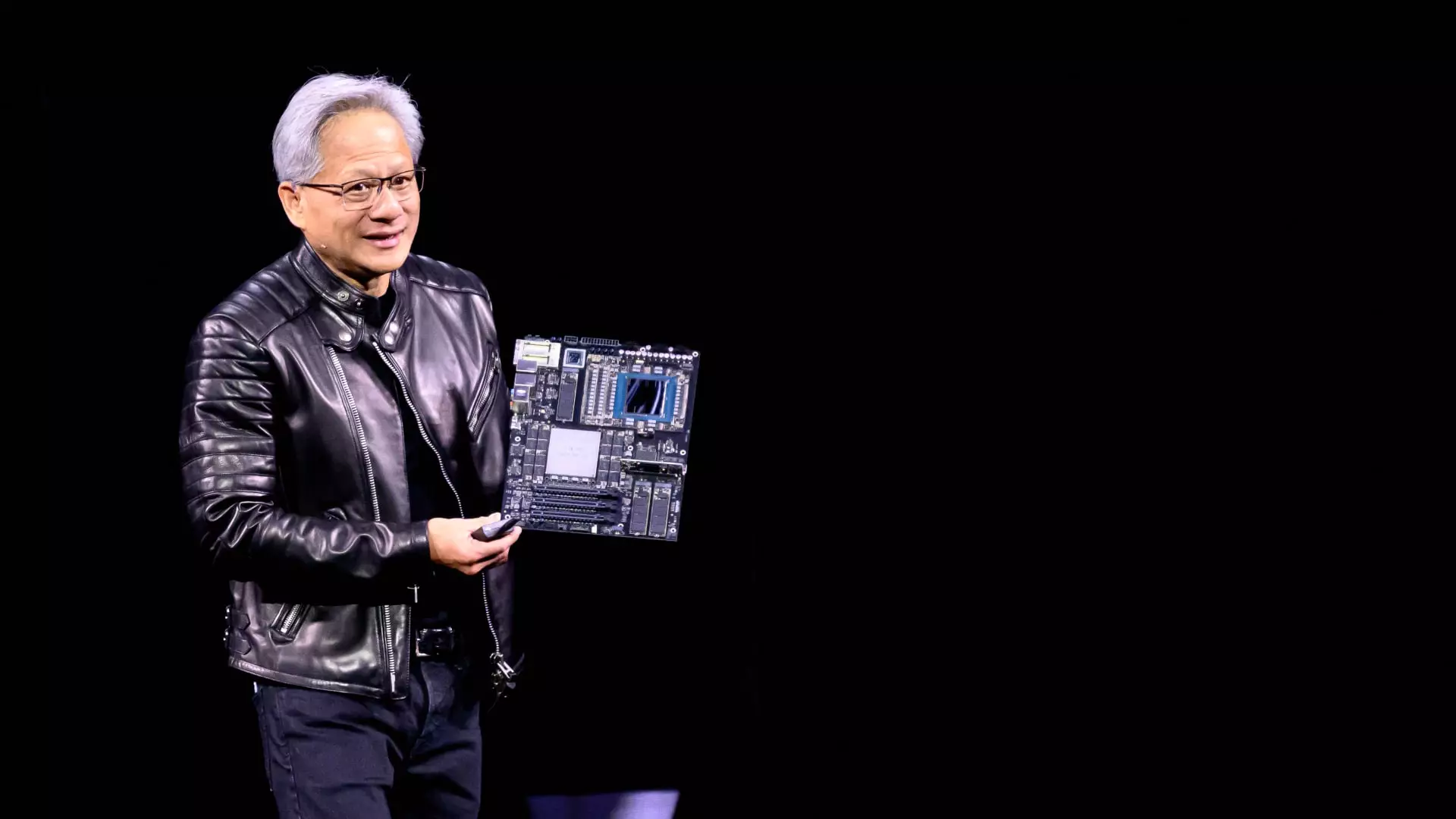

Nvidia CEO Jensen Huang recently delivered a compelling keynote at the GPU Technology Conference (GTC), one that was as unscripted as it was insightful. In a world increasingly dominated by artificial intelligence, Huang’s primary message was unequivocal: customers should invest in the most advanced chips available to meet evolving technological demands. This isn’t merely about staying ahead; it’s about reshaping the future of AI infrastructure itself. The rallying cry for “faster chips” resonates in an era where speed drives not just efficiency but innovation.

The way Huang frames his argument sets him apart as a visionary. His assertion that faster chips would ease concerns about cost and return on investment strikes at the heart of AI development. Huang emphasized that performance improvements over the next decade will redefine cost structures, because executing complex tasks faster can yield tremendous economic benefits. Speaking with journalists after the keynote, Huang declared, “Speed is the best cost-reduction system.” Statements like this signal a strategy rooted in the belief that technological advances and economic viability can not only coexist but reinforce each other.

Breaking Down the Economic Model

Huang took the stage not just to announce new products but to educate his audience on their financial implications. Focusing on the economics of Nvidia’s cutting-edge chips, he highlighted a critical metric: cost per token, the expense of producing a single token, the basic unit of output from an AI model. By working through the calculations live, Huang reassured customers that their investments would yield substantial returns, even as AI infrastructure costs skyrocket.
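To make the cost-per-token idea concrete, here is a minimal Python sketch. It assumes a system’s cost can be expressed as a flat hourly figure (amortized hardware, power, hosting) and that it produces tokens at a steady rate. The function name and every number below are hypothetical illustrations, not Nvidia’s figures or its actual methodology.

```python
# Hypothetical illustration only: all figures are made up, not Nvidia data.

SECONDS_PER_HOUR = 3600


def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Return the cost (USD) of producing one million output tokens.

    Assumes a fixed cost per hour of operation and a steady
    throughput of `tokens_per_second`.
    """
    if tokens_per_second <= 0:
        raise ValueError("throughput must be positive")
    tokens_per_hour = tokens_per_second * SECONDS_PER_HOUR
    return hourly_cost_usd / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Hypothetical baseline system.
    baseline = cost_per_million_tokens(hourly_cost_usd=100.0, tokens_per_second=10_000)
    # Hypothetical faster system: 50% more expensive per hour, but twice the throughput.
    faster = cost_per_million_tokens(hourly_cost_usd=150.0, tokens_per_second=20_000)
    print(f"baseline: ${baseline:.2f} per million tokens")
    print(f"faster:   ${faster:.2f} per million tokens")
```

Under these assumptions, a system that costs 50% more per hour but runs twice as fast still lowers the cost of each token, which illustrates the logic behind Huang’s claim that speed is the best cost-reduction system.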

This angle is especially crucial as investors cast wary glances toward the major cloud service providers (Microsoft, Google, Amazon, and Oracle) and their capital spending on AI. Huang argued that these providers, despite a tightening budgetary environment, are on the verge of pouring hundreds of billions of dollars into AI-centric infrastructure. If they move quickly, the result could be an unparalleled alignment between demand for speed and the supply of advanced technology.

The Blackwell Ultra systems, meanwhile, are poised to transform data-center profitability. Nvidia projects that these systems could generate up to fifty times more revenue for data centers than their predecessors, a claim that further solidifies Huang’s position as a leading figure in the AI landscape. If that projection holds, it would say a great deal about Nvidia’s potential to redefine how businesses engage with AI technologies.

The Impending Tech Landscape

Part of Huang’s unscripted speech was dedicated to outlining Nvidia’s ambitious roadmap through 2028, including the Rubin Next and Feynman AI chips. This foresight matters because it directly addresses the concerns of cloud customers who are already planning their future investments. Huang’s confident declaration that major infrastructure initiatives worth “several hundred billion dollars” are on the horizon illustrates the massive economic forces in motion and Nvidia’s critical role within them.

What really sets Nvidia apart, according to Huang, is the flexibility and adaptability of its GPUs, especially compared with the custom-built ASICs that cloud providers are developing. Although the tech industry is rife with custom chip projects aimed at tailored solutions, Huang’s skepticism about their viability shines through. His insistence that custom chips must outperform established solutions to make a dent in the market underscores Nvidia’s self-assured place in the broader tech ecosystem.

Nvidia’s Aspirational Edge

Huang posed a question that challenges both potential competitors and investors: “What do you want for several $100 billion?” This bold rhetoric captures the essence of Nvidia’s strategic vision. It’s not merely about creating better chips; it’s about positioning Nvidia as the backbone of future AI development. His mindset reflects an ambition to dominate the field by making Nvidia’s offerings the natural destination for that level of investment.

By merging technical innovation with economic prudence, Nvidia is positioning itself not just as a participant in the AI race but as a definitive leader. As the landscape evolves, the company’s commitment to faster, more powerful chips will likely continue to elevate its status, making it indispensable to cloud providers and investors alike. In a world increasingly reliant on AI, Nvidia sits squarely at the center of the conversation about future technologies.